New images on Planet Four: Terrains

Image Credit: Planet Four tile derived from a CTX image – NASA/JPL-Caltech/Malin Space Science Systems

We’ve uploaded a new batch of CTX data onto Planet Four: Terrains. These new images have never been reviewed by human eyes in such detail before. With your help, Planet Four: Terrains aims to map where different types of Martian terrains occur in images taken of the South Pole by the Context Camera aboard the Mars Reconnaissance Orbiter. We will use the locations you identify to find new areas of interest to serve as targets for detailed study with the HiRISE camera, the highest resolution camera ever sent to a planet! These high-resolution images will in turn end up on the original Planet Four, where they will be used to study the fan and blotch cycle in these new areas.

Who knows what interesting finds might be waiting in these new images? Explore the South Pole of the Red Planet today and help identify terrains at http://terrains.planetfour.org

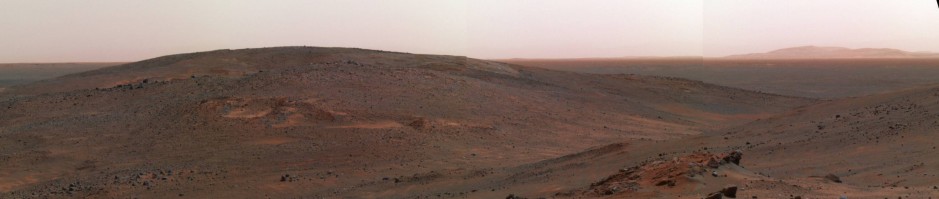

Mars and the Planetary Gang in the Early Morning Sky

Wake up early and view our planetary neighbors in all their glory. Starting this weekend you’ll be able to find Mars and four other planets from our Solar System in the early morning sky. In addition to the Red Planet, this planetary alignment includes Mercury, Venus, Jupiter, and Saturn. Some of the planets will continue to be visible for two or three weeks or more, but the best time to see all five is from Saturday, January 23 through the first week of February.

Below is a guide to help direct you to the right spot. The best time to look is just before dawn (about 45 minutes before), while the sky is still dark.

Credit: NASA/JPL-Caltech

Most of the planets are bright compared to stars in the sky, so you should be able to glimpse them without a telescope or binoculars, though you’ll likely need binoculars to spot tiny Mercury. If you’re having trouble telling the planets from the background stars in that patch of sky, this (below) might help.

Mars should stand out as it will have a reddish tint thanks to all the iron oxide dust (or maybe better to say rusty dust) that covers its surface and swirls in its atmosphere. The bright star Spica will sit between Jupiter and Mars. Our own Moon will also join this cosmic display, so if you’re having a hard time finding the planets, try again on the mornings around February 1st. That’s when our Moon will be visible near Mars.

You can find more details on how to spot this early morning show here and here. If you do spot Mars, take a moment to think about the fact that you’re viewing a distant world whose atmosphere and climate you can help us better understand. You can explore Mars and help map seasonal fans on the South Pole of Mars with the Zooniverse’s Planet Four project (http://www.planetfour.org), and if you do get a glimpse of Mars, post your photos in the comments section and we’ll share them here in a future blog post.

What are the pancakes in depressions?

You might have seen images like those below while classifying on Planet Four: Terrains.

Some people on Talk have started labeling them #pancakesindepressions. I didn’t know what was causing this terrain, so I showed these to the rest of the Planet Four: Terrains team. They think this is a variation on the same processes that create the swiss cheese terrain: the sediment layers contain varying amounts of ice and so get eroded at different rates, creating the layered surface.

I’ve posted an example of the swiss cheese terrain below for reference:

Example of swiss cheese terrain

The swiss cheese terrain (see above picture) is composed of a series of small edged pits that are caused by the uneven deposition and sublimation of carbon dioxide ice. The pancakes in depressions are a separate feature, so they shouldn’t be marked as swiss cheese terrain in the main classification interface, but if you see more images like the examples above, do mark them on Talk with #pancakesindepressions.

Status of analysis pipeline

Dear Citizen Scientists!

Long time no hear from me, sorry guys! Last year I was struggling to manage 4 projects in parallel, but at least one of them is finally a funded PlanetFour activity (since last August), yeah!

I’m now down to three projects, with another one almost done, leaving me more time on PlanetFour. Things are progressing slowly, but steadily. To recap, here’s where we are:

We have identified 5 major software pipelines that are required for the full analysis of the PlanetFour data, starting from your markings to results that are on a level that they can be used in a publication or shown at a conference. Four of these pipelines are basically done and stable, with the fifth one existing as a manual prototype but not yet put into a stable chain of code that can run from beginning to end. Figure 1 shows the first four pipelines that are finished.

Figure 1: The current manifestation of the PlanetFour analysis pipeline.

The need for the fifth pipeline was only discovered recently, when we tried to create the first science plots from PlanetFour data: some of the HiRISE input data that we use is of such high resolution (almost a factor of 2 better than the next level down) that the Citizen scientists discover a lot more detail than in the other data. This led to an unnatural jump in the number of marked objects over time, making us wonder for a bit why a sudden increase in activity would occur so late in the polar summer. That is, until I checked the binning mode of the HiRISE data that was used for those markings: all of the ‘funny data’ were taken in the highest resolution possible (while the others are binned down by a factor of 2 or 4 for data-transport margins).

So, we now understand that we need to filter and/or sort for the imaging mode that HiRISE was in when the data was taken, which is not a big deal, it just needs to be implemented in a stable fashion instead of trial-and-error code in a Jupyter notebook.
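To illustrate the idea, here is a minimal sketch of sorting markings by imaging mode before counting them. It is not the actual pipeline code: the record layout and the `binning` field name are assumptions made up for this example, with 1 standing for full resolution and 2 or 4 for binned-down data.

```python
from collections import defaultdict


def group_by_binning(markings):
    """Group marking records by the binning mode of their source image.

    Each record is assumed to be a dict with a hypothetical 'binning' key.
    """
    groups = defaultdict(list)
    for marking in markings:
        groups[marking["binning"]].append(marking)
    return dict(groups)


def counts_per_binning(markings):
    """Count markings separately for every binning mode."""
    return {mode: len(group) for mode, group in group_by_binning(markings).items()}


# Made-up example records, not real PlanetFour data.
markings = [
    {"image_id": "a", "binning": 1},
    {"image_id": "b", "binning": 2},
    {"image_id": "c", "binning": 1},
    {"image_id": "d", "binning": 4},
]

# Keep only the markings made on full-resolution images.
full_res = group_by_binning(markings)[1]
```

Comparing counts only within one binning mode keeps a resolution change from showing up as a jump in activity.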

Okay, the other thing that is new: For months we were clustering your markings together using only the x,y base coordinates of fans and the center x,y coordinates of blotches. This simplest approach already worked quite well, but a closer review of the acceptance and rejection rates revealed that some of the more ‘artistically’-motivated markings would survive this reduction scheme and create final average objects that seem to come from nowhere at a quick glance. Take Figure 2 for example:

Figure 2: Process chain for one PlanetFour image_id. Upper left: The HiRISE tile as presented to Citizen scientist. Upper middle and right: The raw fan and blotch markings as created by YOU! 😉 Lower right and middle: The reduced cluster average markings. Lower left: After fnotching and cutting on 50% certainty, the resulting end products.

One can see that the lower left image, the end of the first 3 pipelines, contains some markings that seem to come out of nowhere. They are in fact created by an artistic set of fans visible in the upper middle plot, where three fan markings are put where no visible ground features are. Because the base points of these 3 fans are nicely touching each other, they survive the clustering reduction, as the algorithm thinks it is a group of valid markings. Or, better said, it *thought* so. I have taught it better now, and it includes the direction as a criterion for the clustering as well. As Figure 3 shows, this helps clean up the magical fans out of nowhere.

One can see that there are still some double-blotches visible, but another loop over those remaining ones, checking for closeness to each other, will unify those as well.
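The effect of adding direction as a clustering criterion can be sketched in a few lines. This is only an illustration under stated assumptions, not the clustering code used in the pipeline: it uses a simple single-linkage grouping, represents each fan as a hypothetical `(x, y, angle_in_degrees)` tuple, and the thresholds `max_dist`, `max_angle` and `min_size` are made-up values.

```python
import math


def angle_diff(a, b):
    """Smallest absolute difference between two angles, in degrees (0..180)."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def cluster_fans(fans, max_dist=10.0, max_angle=20.0, min_size=3):
    """Link two fans when their base points are within max_dist pixels AND
    their directions differ by at most max_angle degrees.

    Returns the clusters (lists of indices into fans) that have at least
    min_size members; smaller groups are rejected.
    """
    parent = list(range(len(fans)))

    def find(i):
        # Follow parent links to the cluster root, compressing the path.
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i in range(len(fans)):
        for j in range(i + 1, len(fans)):
            xi, yi, ai = fans[i]
            xj, yj, aj = fans[j]
            close = math.hypot(xi - xj, yi - yj) <= max_dist
            aligned = angle_diff(ai, aj) <= max_angle
            if close and aligned:
                parent[find(i)] = find(j)

    clusters = {}
    for i in range(len(fans)):
        clusters.setdefault(find(i), []).append(i)
    return [members for members in clusters.values() if len(members) >= min_size]


# Made-up markings: three 'artistic' fans with touching bases but very
# different directions, followed by three fans that agree in direction.
fans = [
    (100, 100, 0), (102, 101, 120), (101, 103, 240),
    (300, 300, 10), (303, 301, 15), (301, 304, 12),
]

with_direction = cluster_fans(fans)
position_only = cluster_fans(fans, max_angle=180.0)
```

With the direction check switched off (`max_angle=180.0`), the three touching fans form a cluster and survive; with it on, only the group that agrees in direction remains.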

One last thing I want to mention is “fnotching”, as some of you might wonder what that actually means. In difficult-to-read terrain or lighting, or when the features on the ground are kinda hard to distinguish between fans and blotches, it happens that the same ground object is marked both as fan and blotch, and both often enough to survive the clustering. We call these chimera objects “fnotches”, glued together from FaNs and blOTCHES. 😉 What we do is loop over the objects that survive the clustering, and if a fan and a blotch are close to each other, we store how many Citizens have voted for each, create a statistical weight out of that (the ‘fnotch’-value) and store that, too, with the fnotch object. Then, at a later point, depending on the demands of certainty, we can ‘cut’ on that value, and for example say that we only consider something a fan if 75% of all Citizens that marked this object have marked it as a fan. That way we can create final object catalogs depending on the science project that the catalog is being used for.
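As a rough illustration of the cut, here is a small sketch. The exact definition of the fnotch value is not spelled out above, so this assumes the simplest possible one, the fraction of votes that marked the object as a fan; the function names are made up for this example.

```python
def fnotch_value(n_fan, n_blotch):
    """Fraction of all votes on an object that marked it as a fan.

    This definition is an assumption for illustration, not necessarily the
    statistical weight used in the actual pipeline.
    """
    total = n_fan + n_blotch
    if total == 0:
        raise ValueError("object has no votes")
    return n_fan / total


def is_fan(n_fan, n_blotch, cut=0.5):
    """Accept the object as a fan only if its fnotch value reaches the cut."""
    return fnotch_value(n_fan, n_blotch) >= cut


# 6 of 8 Citizens marked the object as a fan: it passes a 75% cut.
strict = is_fan(6, 2, cut=0.75)
# 5 of 8 is not enough for a 75% cut, but passes a 50% cut.
borderline_strict = is_fan(5, 3, cut=0.75)
borderline_loose = is_fan(5, 3, cut=0.5)
```

Storing the vote counts with each object and applying the cut only at the end is what allows different catalogs to be produced from the same clustered data.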

We have just submitted another conference abstract with the most recent updates to the 47th Lunar and Planetary Science Conference, and I seriously, seriously want our paper to be submitted by then, so that you all can see what wonderful stuff we created from all your hard work!

Wish us luck and have a Happy 2016 everyone! Or, as the star of one of my favorite video blogs, HealthCare Triage, keeps saying: To the research!

Michael

Happy 3rd Birthday Planet Four!

Today marks the third anniversary of Planet Four’s launch. We couldn’t do this without each and every volunteer who has contributed to the project over the past 3 years. To each and every one of you, thank you!

We made this birthday mosaic of Mars (a full globe image taken by one of the Viking spacecraft), assembled out of ~16000 Planet Four tiles. If you’re interested in making your own, we used AndreaMosaic.

Generated with AndreaMosaic – http://www.andreaplanet.com/andreamosaic/download/ Original Image Credit: NASA/Viking Tiles – Image Credit: NASA/JPL/University of Arizona

You can download the full resolution image (~86 MB) here. A lower resolution version (~8 MB) can be found here.

If you have a spare moment, classify an image of the Red Planet at http://www.planetfour.org. Onward to year 4!