What have your fan markings told us about the conditions at “Potsdam”?

Today we have a guest post by Tim Michaels. Tim is a research scientist at the SETI Institute who studies how the weather and climate of other worlds affect their surface features.

Have you ever wondered what the Planet Four science team has been able to discover from the many fan measurements that you all provided at the Potsdam fan site? Read on! This is a small part of our new paper in press (Portyankina et al.) at the Planetary Science Journal.

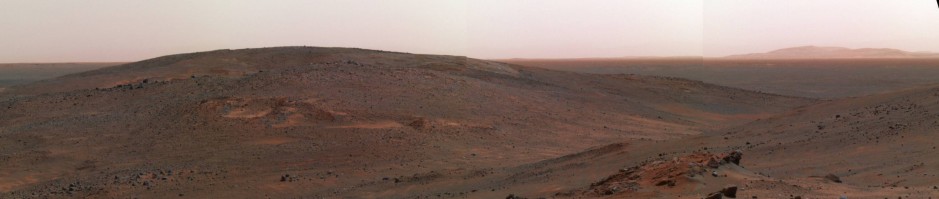

As shown on the topographic map above, the Potsdam site (81.68 S, 66.3 E; the red dot) is located on a broad equator-facing slope at the edge of the South Polar Layered Deposits (or SPLD). The SPLD are a huge layered pile of dirty water ice, dust, sand, and carbon dioxide ice (or “dry ice”) near the south pole of Mars — the pile is kilometers high! They are thought to be the result of many thousands (perhaps even millions) of Mars-years of shifting climate cycles. See the 10 December 2020 blog post below for more info. The black arrows represent the overall fan directions marked by you at Potsdam (for Mars Years 29 and 30).

Based on the topography and some knowledge about how the Mars atmosphere behaves, we can come up with a hypothesis: The fans are pointed in the same directions as katabatic flows would probably be — that is, cold winds rushing down from the higher elevation portions of the SPLD to the south (that is, nearer the pole), twisting toward the left (in this case, toward the west) because of the Coriolis effect. Note that katabatic flows (on Mars and Earth) are often strongest at night.

So, does the state-of-the-art computer climate model (MRAMS; Mars Regional Atmospheric Modeling System) that we are using agree with our hypothesis above? How well does the model output match with the fan measurements that all of you provided?

The plot below shows three early-spring seasonal windows; the first, Ls 180, is at the beginning of southern hemisphere spring. For each season panel, downwind direction (the direction in degrees that the wind is blowing toward; 90 = east, 270 = west) is on the vertical axis and wind speed (in meters per second) is on the horizontal axis. Fan directions from your fan markings are shown as horizontal red lines, while the vertical red dashed lines indicate conservative estimates of wind speeds derived from your fan markings. MRAMS (computer model) winds at 5 m above ground level (AGL) are shown as black dots, and winds at 91 m AGL (about the height of the CO2 gas jets that probably create the dark fans) are shown as cyan dots. A single Mars-day (or sol) of MRAMS winds is shown for each season.
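For readers who want to play with the "downwind direction" convention used on the vertical axis, here is a minimal sketch. It assumes the wind is given as an eastward component `u` and a northward component `v` in meters per second, which is a common convention in atmospheric models; the function names are made up for illustration and are not taken from MRAMS.

```python
import math


def downwind_direction_deg(u, v):
    """Direction the wind blows toward, in degrees clockwise from north.

    u: eastward wind component (m/s), v: northward wind component (m/s).
    With this convention 90 means toward the east and 270 toward the west.
    """
    return math.degrees(math.atan2(u, v)) % 360.0


def wind_speed(u, v):
    """Wind speed in m/s from the two horizontal components."""
    return math.hypot(u, v)


# A purely eastward wind and a purely westward wind (illustrative values)
print(downwind_direction_deg(5.0, 0.0), wind_speed(5.0, 0.0))
print(downwind_direction_deg(-5.0, 0.0), wind_speed(-5.0, 0.0))
```

Note that this is the direction the wind is heading, which is the opposite of the meteorological habit of naming a wind by where it comes from.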

The plot shows that MRAMS wind directions at Potsdam agree with (or are quite close to) P4 wind directions when the MRAMS wind speed is highest. The MRAMS output also tells us that these winds blow at night, and that the winds that blow more toward the east (90) and south (180) are daytime winds. This *does* strongly support our hypothesis that the fans at Potsdam are directed by katabatic winds! An added bonus is that most MRAMS wind speeds matching the P4 directions also are stronger than the conservative wind speed estimates derived from P4 fan markings, as we would expect.
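The kind of comparison described above can be sketched in a few lines. This is only an illustration: the 20-degree tolerance, the function names, and all of the numbers are assumptions made up for the example, not MRAMS output or Planet Four measurements.

```python
def angular_difference_deg(a, b):
    """Smallest absolute difference between two directions, in degrees (0 to 180)."""
    d = abs(a - b) % 360.0
    return min(d, 360.0 - d)


def matching_winds(model_winds, fan_direction, tolerance_deg=20.0):
    """Keep the (direction, speed) pairs whose direction is close to the fan direction."""
    return [
        (direction, speed)
        for direction, speed in model_winds
        if angular_difference_deg(direction, fan_direction) <= tolerance_deg
    ]


# Made-up (downwind direction in degrees, speed in m/s) pairs for one sol
model_winds = [(300.0, 9.0), (310.0, 8.0), (120.0, 2.0), (170.0, 1.5)]
fan_direction = 305.0  # made-up fan direction in degrees

matches = matching_winds(model_winds, fan_direction)
print(matches)
```

The wrap-around handling matters because directions of 350 and 10 degrees are only 20 degrees apart, even though the raw numbers differ by 340.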

Is that all that is possible to understand about Potsdam using your fan markings? No! We would like to know at what season (Ls) the fans stop being active, which would help us better confirm how they form. There are also clearly big differences in fan directions from year to year that may give us clues to how Mars’ atmosphere works. Please help us answer these questions (and others) at Potsdam and at the other south polar fan sites by continuing to mark fans on actual high-resolution spacecraft imagery using this platform!

You have a catalog of seasonal fans and blotches… Now what?

Today, I wanted to share a bit of the analysis we’re working on for Planet Four. Taking the Planet Four fan and blotch catalog from Seasons 1 and 2 of the HiRISE monitoring campaign, we’re now looking at what the average/dominant wind directions derived from your classifications are telling us about the Martian south polar surface winds.

I wanted to show an example of what the science team is doing with this. Tim Michaels has joined the science team, and he’s an expert on climate modeling. We’re using the MRAMS (Mars Regional Atmospheric Modeling System) climate model/computer simulation to compare the fan directions with the wind directions expected from the simulation. MRAMS takes the physics we know about atmospheres and how we think these processes work, and computes what the atmosphere is doing and its conditions. We’re working on comparing the output of MRAMS to the wind directions we infer from the Planet Four fan directions.

Below is an example of one of the types of plots the team has been looking at. Here we show where the dominant fan direction is pointing in the full HiRISE frame from the Planet Four fan catalog. Think of this as telling you where the wind is headed. Each arrow represents a HiRISE observation image taken as part of the Spring/Summer monitoring season. The color of each arrow tells you which block of the Spring/Summer season the image was taken in. For timekeeping on Mars, we use L_s, solar longitude, which describes where Mars is located in its orbit around the Sun. L_s=180 is the start of Southern Spring, and L_s=220 is well into Southern Spring (Southern Summer begins at L_s=270). We have 2 Mars Years as part of the current Planet Four catalog. We plot the directions from each year separately in the left and middle plots, and all together in the rightmost plot. The left and middle plots show the topography that was used by the MRAMS model, and the rightmost plot shows the highest-resolution topography measured by the Mars Global Surveyor’s Mars Orbiter Laser Altimeter.
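One subtlety when boiling many fan directions down to a single arrow is that angles wrap around: fans pointing at 350 and 10 degrees should average to roughly 0, not 180. A circular mean handles this. The sketch below is one common way to do it and is an assumption for illustration; the post does not say exactly how the team computes the dominant direction, and the function name and numbers are made up.

```python
import math


def circular_mean_deg(angles_deg):
    """Mean direction of a list of angles in degrees, respecting wrap-around at 360."""
    sin_sum = sum(math.sin(math.radians(a)) for a in angles_deg)
    cos_sum = sum(math.cos(math.radians(a)) for a in angles_deg)
    return math.degrees(math.atan2(sin_sum, cos_sum)) % 360.0


# Made-up fan directions (degrees) from a single image
fan_directions = [80.0, 100.0, 95.0, 85.0]
print(circular_mean_deg(fan_directions))
```

If the directions are spread evenly around the circle, the sine and cosine sums nearly cancel and the mean direction stops being meaningful, so it is worth checking the spread as well.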

Plots like this help the team look at the impact of topography and the structure of the local surface that might be contributing to how the wind blows. From this image we see that Giza is on the edge of an area where the elevation drops as we move northward in latitude. Here we can see that the topography is likely playing a significant role, with the wind likely traveling from the highest-elevation region (bottom of the plot) to the lower elevations. We’ll be able to compare with the detailed output from the MRAMS simulation, but the topographic plots help us put the results from MRAMS in context. The simulation will tell us what direction it thinks the wind is blowing, but it won’t necessarily tell us why. These topographic plots help us add more explanation to the story.

An Update on Planet Four: Ridges

It’s been a busy summer for the Planet Four: Ridges science team. The project’s first research paper was submitted to the journal Icarus. A big thank you to all the volunteers and our active volunteers on Talk who have contributed lots of great polygonal ridge locations that went into the paper’s analysis. Below you’ll find a map showing the CTX images that were searched by Planet Four: Ridges volunteers using the main classification interface as part of the study.

The first step in the peer review process is getting the referee reports back. The referees are researchers studying Mars who give independent feedback on the paper. Normally the referees are anonymous, and the author does not know who they are. The referees read the paper and give the editor their opinion on whether the paper is of sufficient quality to be published in the journal, along with feedback on how the manuscript/work could be improved. The job of the referee is to point out areas that should be clarified and where more analysis is needed before the paper can be accepted for publication in the journal.

We’ve recently received the feedback from the two anonymous referees. The referees see that there is merit in the Planet Four: Ridges catalog. They also gave a lot of great feedback on where we can improve the analysis and manuscript. We’re working on addressing the referees’ comments and taking on board their feedback. We’ll keep you posted as we move through the paper revision process. We’ll do some further analysis, rework the paper draft, and add some additional text. Once we’ve done that, we’ll write a response to the referees’ reports outlining what was changed in or added to the paper to address the points they raised. Then we’ll resubmit the paper and send our response to the journal. The referees will read everything and send back further questions, concerns, and points that need clarification. We will post more details about the key results of the paper once the paper is accepted and published by the journal.

Research Experience for Undergraduates

There is a nice program for undergraduates here in the US that is called REU: Research Experience for Undergraduates.

Within this framework, the Planet Four team here in Boulder, CO was fortunate to receive funding support from the United Arab Emirates for one of these undergraduate positions this summer.

Working with us was Shahad Badri, and the project we came up with for her was to look at blotch data from Planet Four. Our hypothesis was that this should be relatively straightforward and provide us with insights on jet eruption physics alone, because no wind was involved in depositing the jet deposits.

As usual, the reality turned out to be more complicated than our naive thinking, so we are still digesting the results of plotting blotch area and blotch eccentricities over the time of the Martian year (= Ls = Solar Longitude), but we wanted to show you the nice poster that Shahad came up with at the end of the summer project.
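For the curious, the two quantities mentioned above are simple to compute if a blotch is treated as an ellipse. The sketch below assumes each blotch is described by two semi-axis lengths; the function names and the example numbers are made up for illustration and are not taken from the Planet Four catalog.

```python
import math


def ellipse_area(semi_axis_1, semi_axis_2):
    """Area of an ellipse from its two semi-axes (same length unit squared)."""
    return math.pi * semi_axis_1 * semi_axis_2


def ellipse_eccentricity(semi_axis_1, semi_axis_2):
    """Eccentricity of an ellipse: 0 for a circle, approaching 1 when very elongated.

    Both semi-axes must be positive; the order does not matter.
    """
    a = max(semi_axis_1, semi_axis_2)  # semi-major axis
    b = min(semi_axis_1, semi_axis_2)  # semi-minor axis
    return math.sqrt(1.0 - (b / a) ** 2)


# A made-up blotch with semi-axes of 5 and 3 units
print(ellipse_area(5.0, 3.0), ellipse_eccentricity(5.0, 3.0))
```

A blotch laid down with no wind would be expected to sit near the circular end of this scale, which is why eccentricity is a natural quantity to track through the season.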

As in any exciting science project, the analysis created more questions than we had before. We will need to juxtapose these results with geometrical parameters of the fans, to see where we may have transitions between fans and blotches due to ground winds shifting deposits around and making blotches look more fan-like.

Thanks to Shahad for her diligent work over this summer!

Building the NASA Citizen Science Community Meeting

Greetings from Tucson, Arizona. I’m here with Planet Four PI Candy Hansen at the Building the NASA Citizen Science Community Meeting. The aim of the workshop is to bring together researchers engaged in successful citizen science projects, citizen science experts and platforms supporting citizen science projects (including representatives from Zooniverse), the NASA Science Mission Directorate, and researchers interested in applying citizen science to their research problems.

I gave an invited talk (my slides are included below) highlighting science results and the success of Planet Four and advertising Planet Four: Terrains and Planet Four: Ridges. It’s exciting that people in the planetary and astronomical community see Planet Four as a successful project. That is in large part due to the contributions of the Planet Four volunteer community. It was great to talk about Planet Four’s first paper and also mention the science team is working on three other publications right now based on the first fan and blotch catalog.

Can I Lend a Hand? Co-operation between AI and Martian Scientists

Today we have a guest post from Dr Eriita Jones and Professor Mark McDonnell. Eriita is a Planetary and Space Scientist, Research Fellow at the School of IT and Mathematical Sciences, University of South Australia, and an ECR member of the National Committee for Space and Radio Science. Her primary research areas are (i) the remote detection and characterisation of subsurface water environments on Mars and Earth, and (ii) quantifying the habitability of other planetary bodies. She is particularly interested in new computational data analysis techniques and in assessing the benefits of machine learning for space science. Mark McDonnell leads the Computational Learning Systems Laboratory at University of South Australia. He has published over 100 research articles in the fields of machine learning, computational neuroscience, and statistical physics. Mark has worked extensively with industry partners to deliver applied machine learning solutions in areas such as precision agriculture, recycling, and sports analytics. His research interests lie at the intersection of machine learning and neurobiological learning.

Artificial intelligence may get some bad press, but there are of course many tasks in which AI can provide tremendous benefit to human beings. One of the tasks that AI can be utilised for is called ‘image segmentation’, which is the process of automatically dividing an image into objects or categories so that every pixel in the image receives an associated label (e.g. car, dog, tree). This is essentially what the Planet Four citizen scientists are doing when they manually outline the boundaries of fans and blotches in polar springtime imagery from Mars. Just like a human being, in order to learn a new skill a machine needs to be taught (or ‘trained’) in the task it is being asked to perform. For state-of-the-art automated image segmentation, this training requires large amounts of data in the form of images with the categories of interest clearly labelled. In 2018, researchers at the Computational Learning Systems Laboratory at the University of South Australia in Adelaide, Australia, realised that a large amount of labelled imagery was exactly what the citizen scientists on the Planet Four project were generating. That was the start of a collaboration with the Planet Four Science Team. We wondered: could we teach an algorithm to automatically detect fans and blotches in Martian imagery? How well could a machine learn these complex features? And could the algorithm provide information which would assist the scientists in their study of these Martian phenomena?

The machine learning algorithms used here are examples of deep Convolutional Neural Networks (CNNs), which generally perform very strongly on image segmentation problems. The algorithms are fed thousands of labelled fan and blotch images produced by the Planet Four citizen scientists. After lots of exposure to what fans and blotches look like at different locations, years, solar longitudes, and resolutions, the algorithms become able to generalize from their experiences and apply their learning to new situations (in this case, unlabelled images that they have never seen before). In order to assess how well the machine learning techniques are performing, the algorithms are given a test. They are asked to predict where the boundaries of the fans and blotches are in some labelled images, but the algorithms are not shown the labels and have never seen those images before. We can then compare the machine’s predictions with the ‘correct answers’: the manual labels drawn by citizen scientists. We compare with another method as well, a more traditional and less complex image classifier that does not employ machine learning. The figures below show the output on a subset of one HiRISE image.
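To give a feel for how a prediction can be scored against the ‘correct answers’, here is a minimal sketch of intersection over union (IoU), a widely used overlap score for segmentation. The post does not state which metric is actually used in this work, so treat the choice of IoU, the function name, and the tiny made-up masks as assumptions for illustration.

```python
def intersection_over_union(predicted, labelled):
    """IoU between two binary masks given as equally sized nested lists of 0/1.

    1 marks a pixel inside a fan or blotch, 0 marks background.
    Returns 1.0 when both masks are empty (nothing to find, nothing predicted).
    """
    intersection = 0
    union = 0
    for predicted_row, labelled_row in zip(predicted, labelled):
        for p, l in zip(predicted_row, labelled_row):
            if p and l:
                intersection += 1
            if p or l:
                union += 1
    return intersection / union if union else 1.0


# Made-up 2x3 masks: the prediction and the label agree on 2 of 4 marked pixels
predicted_mask = [[0, 1, 1],
                  [0, 1, 0]]
labelled_mask = [[0, 1, 0],
                 [0, 1, 1]]
print(intersection_over_union(predicted_mask, labelled_mask))  # 0.5
```

A score of 1 means the predicted outline matches the label pixel for pixel, and a score of 0 means the two do not overlap at all.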

We are busily working on validating the output of the machine learning algorithms on a large number of images, but we can already see ways in which they can be very useful. Although the algorithms might not always find every fan or blotch in an image, they are very good at deciding whether there is at least one feature present. In other words, they do a good job of sorting the images which have a fan or blotch from those that have no fans or blotches at all. This is a very useful way of streamlining the presentation of images on the Planet Four Zooniverse platform. For example, instead of having volunteers click through ‘featureless’ images, the Planet Four Team may in future wish to make sure that every image that appears has a fan or blotch in it for labelling. Additionally, by automatically predicting the presence of fans and blotches in new images, the algorithms provide early information on feature number and density that can allow the Planet Four team to be more selective about which images have the highest priority for manual labelling.

Could machine learning one day put citizen scientists out of a job? We don’t think this is very likely. The algorithms may eventually learn to perform very well on new images if those images are similar enough to ones they have seen before. But if they are shown an image that is very different (e.g. with unusual lighting conditions, strange background terrain, or uncharacteristic fans and blotches), it is likely that the machine won’t be quite as good at segmentation as a well-trained human eye. So don’t worry, citizen scientists, AI is just here to lend a hand. Thanks for all the fabulous data, and stay tuned for an exciting update in a few months!

A Sneak Peek at the Future of Planet Four

The science team is working on migrating Planet Four to the Zooniverse’s more modern Project Builder (or Panoptes) platform. This is a slow process because the newer Zooniverse platform displays images differently, and also because we want to take the lessons we’ve learned over the past 6 years and use them to make the web interface even better. We thought we’d share some screenshots from our work-in-progress prototype.

The next stage will be getting some images on the site and beta testing the changes we want to make and seeing how well these tweaks do compared to the current Planet Four website/classification interface. This might take a few months, but we’re working hard to have this ready before the end of the year.

In the meantime, we have new images on the original Planet Four website that we are hoping to get classified before the older Zooniverse platform that runs the current Planet Four site is officially retired. We’re trying to make the push in April to get these new images classified. If you can spare a few minutes to classify an image or two on the main Planet Four site, we’d appreciate it.

Exploring Interannual Variability in Manhattan: New Results and New Images on Planet Four

Today we have a post by Candy Hansen, principal investigator (PI) of Planet Four and Planet Four: Terrains. Candy also serves as the Deputy Principal Investigator for HiRISE (the camera providing the images of spiders, fans, and blotches seen on the original Planet Four project). Additionally she is a member of the science team for the Juno mission to Jupiter. She is responsible for the development and operation of JunoCam, an outreach camera that involves the public in planning images of Jupiter.

We have discovered something very interesting in the number and size of the fans you are measuring, which show up on the south polar seasonal cap every spring. It turns out that in springs following both global and regional Type A dust storms we see a lot more fans than normal for that time of year. This picture compares sub-images from 7 Martian years taken in “Manhattan” at solar longitude 195-197. The position of Mars in its orbit is the solar longitude (“Ls”), and southern spring begins at Ls 180 when the sun crosses the equator and heads south. Mars Years 29, 30 and 33 have visibly more fans. There was a global dust storm in Mars Year (MY) 28 that started in early summer. Intense Type A storms, which are regional and centered at high southern latitudes, took place in MY29 and MY32. It looks like the springs after these storms have large numbers of seasonal fans.

Although the visual impression is powerful when these images are compared, we can go beyond that now, thanks to the Planet Four fan catalog that your work has populated. We can quantify the differences. We used the MY29 and MY30 catalog that we’ve published this year in our first paper, and also newly generated catalogs for Manhattan for MY28, MY31, and MY32. Instead of just saying “there are a lot more fans” we can say “there are over twice as many fans” in MY29 and MY30 compared to MY28, MY31, and MY32. We do that by querying the catalog; an example is shown below. The plot below shows numbers of fans as a function of time in the spring, and we can compare 5 years at Ls 195. I had the pleasure of presenting this (your!) work at the 2019 Lunar and Planetary Science conference last week in Houston, Texas.
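To illustrate what a catalog query of this kind might look like, here is a minimal sketch that counts fans per Mars Year within a window of solar longitude. The field names (`marking`, `mars_year`, `ls`) and every row in the toy catalog are hypothetical, invented for the example; the real catalog’s layout and numbers are different.

```python
from collections import Counter


def fans_per_mars_year(catalog, ls_min, ls_max):
    """Count fan markings per Mars Year for rows with ls_min <= Ls <= ls_max."""
    counts = Counter()
    for row in catalog:
        if row["marking"] == "fan" and ls_min <= row["ls"] <= ls_max:
            counts[row["mars_year"]] += 1
    return dict(counts)


# Toy catalog with made-up rows (not real Planet Four data)
toy_catalog = [
    {"marking": "fan", "mars_year": 28, "ls": 195.4},
    {"marking": "fan", "mars_year": 29, "ls": 195.9},
    {"marking": "fan", "mars_year": 29, "ls": 196.2},
    {"marking": "blotch", "mars_year": 29, "ls": 196.0},
    {"marking": "fan", "mars_year": 29, "ls": 210.0},
]

print(fans_per_mars_year(toy_catalog, 195.0, 197.0))
```

Restricting the comparison to the same Ls window in every year matters, because the number of fans changes quickly as spring progresses.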

To confirm that Type A storms are playing a significant role in the composition of the seasonal ice sheet that produces the carbon dioxide jets that bring up the dust and dirt that create the seasonal fans and blotches, we need to look at the number of seasonal fans and the area covered in MY33. We only have classifications for Seasons 1-5 of the HiRISE seasonal monitoring campaign (MY28-32). This brings me to my request: We would really like to have Planet Four measurements for MY33. We have uploaded the images, so it is ready for you to process. We would like to thank you in advance for your generosity with your time. Once those measurements are in we will be ready to write our next paper documenting these findings in a peer-reviewed scientific journal. As you know we have published one paper already and two more are in progress. This is a significant result, and we could not have done this without all of you.

Help classify the new images of Manhattan today at http://www.planetfour.org.

6 Earth Years of Planet Four

Happy Birthday Planet Four. This month marks 6 years of Planet Four. We couldn’t do any of this without the Planet Four volunteer community. Thank you for all of your help and contributions. We hope you’re celebrating with a slice of cake or a serving of Mars pie. The team is really excited for what’s to come next. We’re working hard on follow-up papers to the first fan and blotch catalog release. We’re also starting preparations to move the project to the Zooniverse’s newer Project Builder Platform. We’ll keep you posted on all of these efforts right here on the blog. Lots more to come in 2019!

Planet Four Talk at the Division for Planetary Sciences

Greetings from Knoxville, Tennessee. Earlier this morning, I presented our first catalog and early results from comparing the fan directions over two Mars years at the American Astronomical Society’s Division for Planetary Sciences meeting. Here are my slides.